You hit download. You're ready to go. Anthropic says nope.

That's how it played out for a lot of people who wanted to try Claude Cowork, only to find that the app either didn't start or threw an error the moment it noticed an Intel chip on the Mac or a Home edition on the Windows box. Complaints have been piling up on GitHub Issue #20787 for weeks, and Jason Resnick has spoken publicly about the same frustration.

Cowork is aimed at knowledge workers, but it only runs on hardware that a good chunk of those knowledge workers don't actually have on their desks.

So I sat down with five alternatives that deliver on the Cowork promise without forcing you to swap your Mac or your Windows setup. You'll get platform tables, honest pricing notes, and a clear pick at the end for which alternative fits which case.

- Claude Cowork only runs on Apple Silicon and Windows 11 Pro with Hyper-V. Intel Macs and Windows 11 Home are locked out.

- Best alternative for most people: Claude Code by Anthropic. Runs on macOS (Apple Silicon and Intel), Windows 11 (Pro and Home), and Linux, ships with the Pro plan from $20/month, and has no geo-restrictions.

- Open-source picks like OpenCode and OpenWork are free for the tool itself, but you'll still need an API key with a model provider and a bit of tech comfort.

Why Claude Cowork Doesn't Run for Many People

Claude Cowork is, technically speaking, a tiger in a cage. Anthropic locked the tool inside a Linux sandbox to prevent an AI agent from accidentally trashing your live system. That part makes sense. The problem is that the cage needs Apple Silicon (ARM64) hardware on the Mac or, on Windows, the Hyper-V platform. I broke down the full architecture in Claude Code vs. Claude Cowork.

Apple Silicon meets that bar without breaking a sweat. Intel Macs don't. Windows 11 Pro ships Hyper-V. Windows 11 Home doesn't ship it at all. And Linux users are left empty-handed, because there's no official Linux build.
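If you're not sure which side of that line your machine falls on, here's a minimal Python sketch that applies exactly those rules. It's my own illustration, not Anthropic's installer logic. It assumes Windows reports its edition through `platform.win32_edition()` as `Professional` for Pro and `Core` for Home, and it doesn't check the Windows version number itself.

```python
import platform
from typing import Optional


def cowork_supported(system: str, machine: str, windows_edition: Optional[str] = None) -> bool:
    """Apply the hardware rules described in this article (not Anthropic's own check)."""
    if system == "Darwin":
        # Apple Silicon Macs report "arm64", Intel Macs report "x86_64".
        # Caveat: an x86_64 Python running under Rosetta also reports "x86_64".
        return machine == "arm64"
    if system == "Windows":
        # Assumption: EditionID "Professional" = Windows Pro, "Core" = Windows Home.
        # The article only covers Pro (supported) and Home (not supported),
        # so every other edition is treated as unsupported here.
        return windows_edition == "Professional"
    # Linux and everything else: no official Cowork build.
    return False


def check_this_machine() -> bool:
    system = platform.system()
    # platform.win32_edition() exists since Python 3.8; only ask for it on Windows.
    edition = platform.win32_edition() if system == "Windows" else None
    return cowork_supported(system, platform.machine(), edition)


if __name__ == "__main__":
    print("Cowork-compatible by the rules above:", check_this_machine())
```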

The result:

A big chunk of Cowork's actual target audience (knowledge workers such as marketers, sales pros, HR teams, and finance specialists) simply can't install the app. Stephen Detomasi summed it up in the same GitHub Issue #20787: if you don't match the hardware wishlist, you're out.

That's the gap every alternative is trying to fill. Some better, some worse, all with their own trade-offs. Here are the five I tested.

What I Looked At for Each Alternative

To keep this from being pure gut feeling, I locked in a few criteria up front, picked specifically for the Cowork audience:

- Platforms. Does the tool run on macOS (Apple Silicon and Intel), Windows 11 (Pro and Home), and ideally Linux too?

- Feature set. Code execution, file management, and browser control are non-negotiable, because those are Cowork's core use cases.

- Honest pricing. What does the tool plus model API cost together, and what limits hide in the fine print?

- Audience. Who is the tool really built for? Click-and-go for non-coders or a power-user tool? That belongs on the table.

- Regional usability. Geo-restrictions, missing EU hosting options, or privacy risks are called out clearly.

I ran each alternative for a few days on real tasks I'd normally throw at Cowork. Results coming up.

1. Claude Code

What It Is

Claude Code is Anthropic's CLI for developers and ambitious knowledge workers. It runs straight on your operating system, has full file system access, hooks into VS Code, JetBrains, or your terminal, and uses the same Claude model as Cowork. If Cowork is the polished city car, Claude Code is the off-road truck with proper four-wheel drive.

Platforms

macOS Apple Silicon, macOS Intel, Windows 11 Pro, Windows 11 Home, Linux. You install it via npm and it runs. Period.

Feature Set

This is where Claude Code really shines: skills, subagents, hooks, a native plugin marketplace (over 1,000 plugins and more than 4,000 skills as of April 2026), MCP support with OAuth 2.0, memory through CLAUDE.md plus a dedicated memory tool, and plan mode for structured tasks. Subagents let you define a dedicated worker per task type, and plugins bundle skills, hooks, and MCP servers into installable packages.
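Hooks are the easiest of these features to show concretely. Below is a minimal sketch of a `PreToolUse` hook script, assuming the hook contract described in Anthropic's Claude Code hooks documentation at the time of writing: the pending tool call arrives as JSON on stdin (with `tool_name` and `tool_input` fields), and exiting with code 2 blocks the call and feeds stderr back to Claude. The blocked patterns are hypothetical examples of my own. You'd register the script for the `PreToolUse` event with a `Bash` matcher in the `hooks` section of `.claude/settings.json`; check the current docs for the exact schema.

```python
import json
import re
import sys
from typing import Optional

# Hypothetical examples of shell commands we never want run unattended.
BLOCKED_PATTERNS = [
    r"\brm\s+-rf\s+/(\s|$)",             # wiping the filesystem root
    r"\bgit\s+push\b.*\s--force(\s|$)",  # force-push (but not --force-with-lease)
]


def should_block(payload: dict) -> Optional[str]:
    """Return a reason if this Bash tool call should be blocked, else None."""
    if payload.get("tool_name") != "Bash":
        return None
    command = payload.get("tool_input", {}).get("command", "")
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command):
            return f"Blocked by hook: command matches {pattern!r}"
    return None


def main() -> int:
    # Claude Code pipes the pending tool call in as JSON; do nothing if run interactively.
    if sys.stdin is None or sys.stdin.isatty():
        return 0
    raw = sys.stdin.read()
    if not raw.strip():
        return 0
    reason = should_block(json.loads(raw))
    if reason:
        print(reason, file=sys.stderr)  # stderr is fed back to Claude
        return 2                        # exit code 2 blocks the tool call
    return 0


if __name__ == "__main__":
    exit_code = main()
    if exit_code:
        sys.exit(exit_code)
```

Keeping the decision in a pure `should_block` function makes the rules easy to test without launching Claude Code at all.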

Models

By default Claude Code runs on Anthropic's current line: Sonnet 4.6 as the primary model, Haiku 4.5 for fast tasks, and Opus 4.7 for heavier reasoning workflows. Unlike Cowork, Claude Code also lets you plug into third-party platforms. With Google Vertex AI or Amazon Bedrock, you can run Claude on your own cloud account, which often matters for GDPR-conscious setups. Through gateways like LiteLLM or Bifrost, even GPT-5, Gemini, Groq, or local Ollama models can run inside the Claude Code interface. The Anthropic-native paths are the officially recommended ones, though.
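To make the Vertex AI, Bedrock, and gateway options more concrete, here's a small Python helper that builds the environment variables Claude Code reads to pick its backend. The variable names (`CLAUDE_CODE_USE_BEDROCK`, `CLAUDE_CODE_USE_VERTEX`, `CLOUD_ML_REGION`, `ANTHROPIC_VERTEX_PROJECT_ID`, `ANTHROPIC_BASE_URL`, `ANTHROPIC_AUTH_TOKEN`) follow Anthropic's Claude Code docs as I understand them at the time of writing, so verify them before relying on this. The region, project ID, URL, and token values are placeholders, and the cloud credentials themselves (AWS profile, `gcloud` login) still have to be set up separately.

```python
import os


def claude_code_env(backend: str, **settings: str) -> dict:
    """Return the extra environment variables for the chosen Claude Code backend.

    backend: "anthropic" (default API), "bedrock", "vertex", or "gateway".
    Variable names are assumptions based on Anthropic's docs; verify them.
    """
    if backend == "anthropic":
        return {}  # default: Claude Code talks to Anthropic's API directly
    if backend == "bedrock":
        return {
            "CLAUDE_CODE_USE_BEDROCK": "1",
            "AWS_REGION": settings["region"],
        }
    if backend == "vertex":
        return {
            "CLAUDE_CODE_USE_VERTEX": "1",
            "CLOUD_ML_REGION": settings["region"],
            "ANTHROPIC_VERTEX_PROJECT_ID": settings["project_id"],
        }
    if backend == "gateway":
        # e.g. a LiteLLM proxy exposing an Anthropic-compatible endpoint
        return {
            "ANTHROPIC_BASE_URL": settings["base_url"],
            "ANTHROPIC_AUTH_TOKEN": settings["token"],
        }
    raise ValueError(f"unknown backend: {backend!r}")


if __name__ == "__main__":
    # Placeholder values; substitute your own project and region.
    extra = claude_code_env("vertex", region="us-east5", project_id="my-gcp-project")
    env = {**os.environ, **extra}
    # To launch Claude Code with this env: subprocess.run(["claude"], env=env)
    for key in sorted(extra):
        print(f"{key}={extra[key]}")
```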

Pricing

Included in the Claude Pro plan from $20/month. If you need bigger token budgets, the Max plan covers it. There's no separate tool fee, since the tool and the model come from the same shop.

Who is it for?

For power users and developers who want to push Claude beyond what the Cowork sandbox allows. Also for ambitious knowledge workers who don't mind making friends with the terminal, Git, and config files. If you've never opened a terminal, plan a weekend for the basics.

My Take

If you're already on Claude Pro and you have an Intel Mac or Windows 11 Home, Claude Code is the most painless Cowork alternative out there. You actually get more features, not fewer.

The one catch:

You'll need to get comfortable in the terminal. Pull that off, and you're holding the strongest tool in this whole comparison.

- Runs on macOS, Windows, and Linux without hardware hurdles

- Included in Claude Pro from $20/month at no extra cost

- More reach than Cowork: full file system access, IDE integration, Git workflows

- Built by Anthropic, uses the same Claude model

2. Codex Desktop

What It Is

Codex Desktop is OpenAI's attempt at a Cowork-style experience with a graphical interface, Computer Use, and a choice of several OpenAI models. Install the app, sign in with your ChatGPT account, and start. Sounds great on paper.

If only the geo-block weren't a thing.

Platforms

The Codex app itself runs on macOS Apple Silicon, macOS Intel, and Windows. A Linux version is officially announced but has no firm date. Watch out for Computer Use specifically: since the April 16, 2026 update it's available, but only on macOS at launch, and it was geo-blocked in the EEA, the UK, and Switzerland from day one. So in those regions you get the app, but not the Cowork-style feature.

Feature Set

Since the April 2026 update, Codex ships a real plugin layer with 90+ app connectors (Atlassian Rovo, Box, Figma, GitLab, Linear, Notion, Sentry, Slack, the Microsoft suite, and more). Plugins bundle skills, MCP servers, and app integrations. On top of that you get memory (preview, blocked in the EU), multi-tab sessions, an in-app browser, image generation via gpt-image-1.5, and a scheduling layer that lets an agent resume work days later.

Models

OpenAI recommends GPT-5.5 as the default, with GPT-5.4 as the fallback if 5.5 isn't available in your account yet. ChatGPT Pro subscribers also get GPT-5.3-Codex-Spark as a research preview, optimized for extra-fast coding answers. The `/model` slash command lets you switch models mid-workflow. Anthropic, Google, or other providers aren't supported. You're on the OpenAI stack.

Pricing

Bundled with ChatGPT Plus from $20/month, putting it on price parity with Claude Pro. Need more volume? Bump up to the Pro tier at $200/month or move to API pricing directly.

Who is it for?

For GUI-friendly users outside the EU/UK/Switzerland who already live in the OpenAI stack and want a click-and-go tool for coding plus browser automation. For European users it's only a partial fit since Computer Use is missing. Some tech basics around file paths and workspace config still help.

My Take

If you're outside Europe or you don't strictly need Computer Use, Codex Desktop is a solid Cowork alternative with a decent GUI. If you're in the EU, UK, or Switzerland, I'd hold off until OpenAI lifts the geo-block.

- Runs on macOS (Apple Silicon and Intel) and Windows 11 (Pro and Home), Linux to follow

- GUI makes the tool friendlier than pure terminal solutions

- Included in ChatGPT Plus from $20/month

- Uses current GPT models, including the coding variants

3. OpenCode

What It Is

OpenCode is an open-source CLI that picks up the Claude Code spirit without locking you to a single model provider. You grab the code from github.com/anomalyco/opencode, install the binary, and drop in your API key for Anthropic, OpenAI, or another provider. From there, you work with whichever model you prefer.

I set up OpenCode on a Sunday morning, and 40 minutes later my first workflow was hitting the Anthropic API. Not exactly plug and play, but nothing close to rocket science either.

Platforms

macOS Apple Silicon, macOS Intel, Windows 11 Pro, Windows 11 Home, and Linux. That puts OpenCode right alongside Claude Code as the most platform-friendly tool in this lineup.

Feature Set

OpenCode ships skills, a hook system, and full MCP support (local and remote servers, OAuth 2.0). Its real strength is provider choice: switch freely between OpenAI, Anthropic, Google, Groq, Cerebras, DeepSeek, Mistral, Perplexity, xAI, and local models via Ollama or LM Studio. Plugins are wired in through the config file. There's no central marketplace.

Models

This is where OpenCode is unmatched: 75+ model providers are wired in directly. The big ones use native endpoints (Anthropic Claude, OpenAI GPT, Google Gemini and Vertex, AWS Bedrock, Azure OpenAI), the specialists have their own adapters (Groq, Together AI, Cerebras, DeepSeek, Mistral, Perplexity, xAI). Local models run through Ollama, llama.cpp, or LM Studio, which makes privacy-sensitive setups possible. You can pick a different model per task type, like a cheap Haiku for boilerplate and an Opus for deep refactoring.

Pricing

The tool itself is free under the MIT license. You only pay for the tokens you actually use with your model provider. On moderate use I usually land under $20/month, on heavier weeks it can go higher. Bring your own key, in the literal sense.
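To put numbers on the bring-your-own-key model, and on the idea of routing cheap tasks to a cheap model and hard ones to a premium model, here's a small Python calculator. The per-million-token rates and the monthly token volumes are made-up placeholders, not quoted prices and not my actual usage; plug in your provider's current price list and your own numbers.

```python
# Placeholder rates in USD per million tokens: (input, output).
# These are NOT quoted prices; check your provider's current price list.
RATES_PER_MTOK = {
    "cheap-model": (1.00, 5.00),
    "premium-model": (5.00, 25.00),
}

# Hypothetical monthly workload: task type -> (model, input tokens, output tokens)
WORKLOAD = {
    "boilerplate": ("cheap-model", 2_000_000, 500_000),
    "deep refactoring": ("premium-model", 1_000_000, 200_000),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one task type: tokens times the per-million rate."""
    in_rate, out_rate = RATES_PER_MTOK[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


def monthly_cost(workload: dict) -> float:
    """Sum the cost of every task type in the workload."""
    return sum(task_cost(*spec) for spec in workload.values())


if __name__ == "__main__":
    for task, spec in WORKLOAD.items():
        print(f"{task:>16}: ${task_cost(*spec):.2f}")
    print(f"{'total':>16}: ${monthly_cost(WORKLOAD):.2f}")
```

With these placeholder numbers, boilerplate comes to $4.50 and refactoring to $10.00, for $14.50 in total. The structure is the point: the premium model handles far fewer tokens but still dominates the bill, which is why per-task model choice matters for cost.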

Who is it for?

For tinkerers and technically minded users who want model freedom and full control over API costs. If you like to look under the hood, experiment with various Claude models, GPT models, or local LLMs, and care about data privacy, this is your tool.

My Take

OpenCode is the right call if you already work with several LLM APIs and you don't want to live inside a walled garden. For European users it's a strong pick because there are no geo-blocks, and you can run the whole thing fully offline with self-hosted models if data privacy is a hard requirement.

- Runs on macOS, Windows, and Linux equally well

- Open source under MIT license, no vendor lock-in

- Pick any model provider, local LLMs included

- No geo-restrictions, fully usable in the EU and UK
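For the fully offline route, OpenCode needs to know where your local model server lives. Here's a minimal Python sketch that generates an `opencode.json` pointing OpenCode at a local Ollama instance. The field names (`provider`, `npm`, `options.baseURL`, `models`) follow the Ollama example in OpenCode's provider docs as I remember it, so treat them as assumptions and check the current schema. `http://localhost:11434/v1` is Ollama's default OpenAI-compatible endpoint, and `llama3.2` is only a placeholder for whatever model you've pulled.

```python
import json


def ollama_config(model_id: str, display_name: str) -> dict:
    """Build an opencode.json dict that registers a local Ollama model.

    Field names are assumptions based on OpenCode's provider docs; verify them.
    """
    return {
        "$schema": "https://opencode.ai/config.json",
        "provider": {
            "ollama": {
                # Ollama speaks the OpenAI API, so an OpenAI-compatible adapter is used.
                "npm": "@ai-sdk/openai-compatible",
                "name": "Ollama (local)",
                "options": {"baseURL": "http://localhost:11434/v1"},
                "models": {model_id: {"name": display_name}},
            }
        },
    }


if __name__ == "__main__":
    config = ollama_config("llama3.2", "Llama 3.2 (local)")
    # Save this output as opencode.json in your project root.
    print(json.dumps(config, indent=2))
```

Because requests only go to `localhost`, prompts and file contents stay on your machine, as long as you don't also select a cloud provider as the active model.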

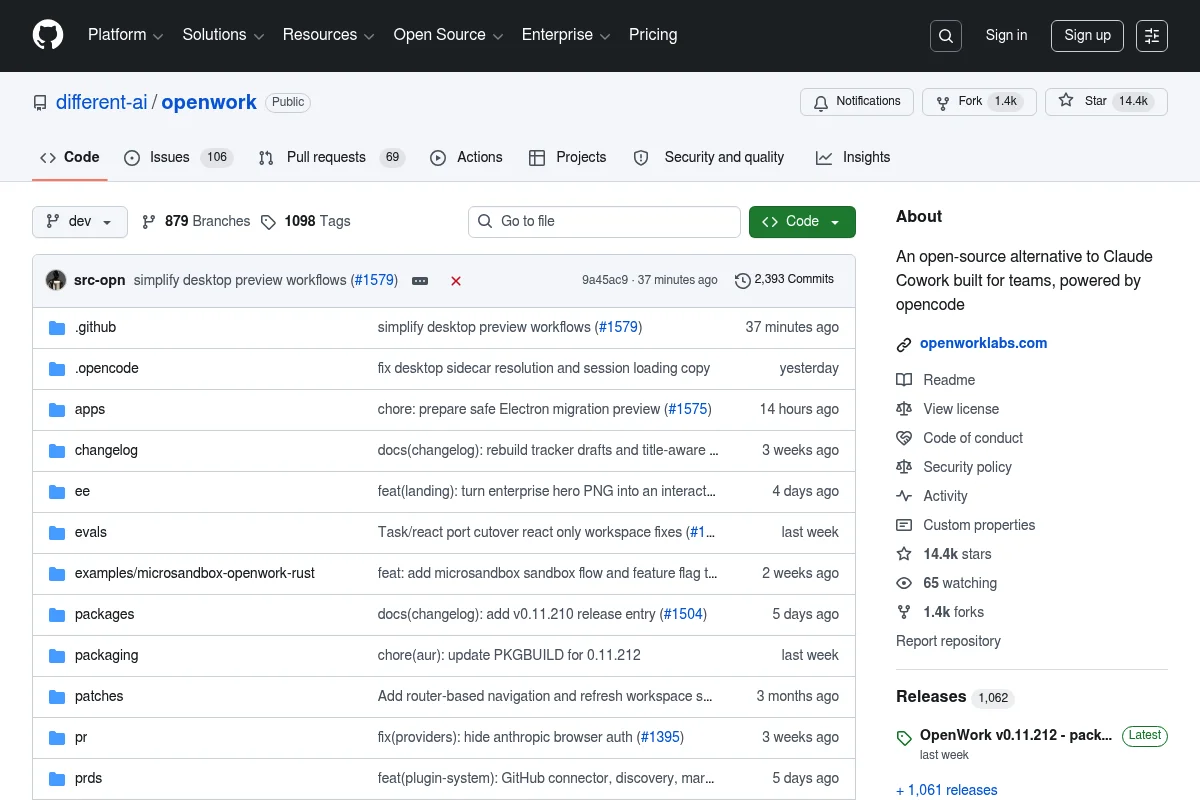

4. OpenWork

What It Is

OpenWork is an open-source AI agent that takes direct aim at Cowork's promise. Sandboxed workflows, Computer Use lite, file operations, and browser control. It's built for people who want a secure Linux environment but don't want to be tied to Anthropic's hardware wishlist.

Platforms

On macOS Apple Silicon, macOS Intel, and Linux, OpenWork runs smoothly. Windows is where things get tricky.

On Windows, the full sandbox experience is officially limited to OpenWork's Enterprise variant, which requires a paid Pro or Enterprise license. So for regular Windows users it's a compromise, not a highlight.

Feature Set

OpenWork wraps the OpenCode world in a Tauri-based GUI plus a dedicated skills manager (installable per project or globally via `opencode.json`), live SSE streaming for sessions, execution-plan visualization on a timeline, and a permission layer. A microsandbox component in Rust isolates risky actions. MCP is passed through OpenCode, so all providers and tools stay available. Multi-agent workflows are missing.

Models

OpenWork inherits the full OpenCode model lineup with 75+ providers. In the GUI you pick the provider and model from dropdowns instead of editing config files. In practice that means Anthropic Claude, OpenAI GPT, Google Gemini, AWS Bedrock, all the specialists, and local Ollama models are a couple of clicks away. For GDPR-conscious setups, this is the most GUI-friendly variant in the comparison.

Pricing

MIT licensed and free. As with OpenCode, you bring your own API key with the model provider of your choice. Because the sandbox layer is lighter than Cowork's, you usually burn fewer overhead tokens too. In practice, that means cheaper day-to-day operation.

Who is it for?

For open-source fans who find a pure terminal too dry but still don't want a commercial cloud product. Many standard workflows ship pre-built, so you become productive faster than with raw OpenCode. Some patience is still required for the initial setup, especially on Windows.

My Take

If sandbox security matters to you and you also want open source, OpenWork is a solid option. On Mac and Linux it works without drama. On Windows, check the docs first to see whether your edition is supported.

- Open source under MIT license, no vendor lock-in

- Sandbox model similar to Cowork's, but lighter weight

- Pick any model provider, fully usable in the EU and UK

- Skill library from the community is growing visibly

5. Factory

What It Is

Factory is a platform that orchestrates multiple coding and workflow agents. Unlike everything else in this lineup, Factory leans into multi-agent workflows where several AI agents work on subtasks in parallel. The company closed a $150M Series C in early 2026, which should keep the roadmap and pace healthy for a while.

Platforms

Factory runs on macOS Apple Silicon, macOS Intel, and Windows 11 (Pro and Home). There's no Linux version, and one isn't on the near-term roadmap either. Linux-only users are out.

Feature Set

Factory's signature is the coordinator droid that decomposes work and dispatches it to specialized sub-droids: Code, Review, Test, Docs, and a Knowledge droid for memory. The official plugins marketplace lets you bundle skills, custom droids, and MCP servers. MCP works over both HTTP and stdio, with 40+ pre-configured servers in the built-in registry. On top of that, Factory is model-agnostic across Claude, GPT-5, and Gemini.

Models

Factory is explicitly model-agnostic and currently supports Claude Sonnet 4.5, Claude Opus 4.5, GPT-5, OpenAI o3, and Gemini 2.5 Pro. On top of that, open-source models like GLM 4.6, DeepSeek V4, and Qwen 3.6 are available if you want to cut costs or self-host. You can pin a model per droid, like Sonnet for the Code droid and Opus for the Knowledge droid. That model-per-role logic is one of the strongest arguments for Factory on larger codebases.
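Factory's coordinator pattern (decompose the work, dispatch it to role-specific droids, pin a model per role, run the pieces in parallel) is easier to picture in code. The sketch below is hypothetical and is not Factory's API: the role names echo the droids mentioned above, the model identifiers are placeholders, and `run_droid` just returns a label instead of calling a model.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

# Hypothetical role -> model pinning, mirroring the example above
# (Sonnet for the Code droid, Opus for the Knowledge droid).
# These identifiers are placeholders, not Factory's real config keys.
MODEL_BY_ROLE = {
    "knowledge": "claude-opus-4.5",
    "code": "claude-sonnet-4.5",
    "review": "gpt-5",
}


@dataclass(frozen=True)
class Subtask:
    role: str
    description: str


def decompose(goal: str) -> list[Subtask]:
    """Hypothetical coordinator step: split a goal into role-specific subtasks.

    A real coordinator would ask a model to plan; this split is hard-coded.
    """
    return [
        Subtask("knowledge", f"collect context for {goal}"),
        Subtask("code", f"implement {goal}"),
        Subtask("review", f"review changes for {goal}"),
    ]


def run_droid(task: Subtask) -> str:
    """Stand-in for calling the pinned model; returns a label instead."""
    model = MODEL_BY_ROLE[task.role]
    return f"[{task.role} @ {model}] {task.description}"


def coordinate(goal: str) -> list[str]:
    subtasks = decompose(goal)
    # Run the sub-droids in parallel; map() keeps results in subtask order.
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        return list(pool.map(run_droid, subtasks))


if __name__ == "__main__":
    for line in coordinate("CSV export"):
        print(line)
```

One simplification worth flagging: the sketch runs all three subtasks at once, whereas a real review step would wait for the code step to finish. Tracking those dependencies is a large part of what an actual coordinator has to do.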

Pricing

Free tier with limits, paid plans starting at $20/month, which puts it on price parity with Claude Pro and ChatGPT Plus. For teams and enterprises there are dedicated tiers with extra security and compliance features.

Who is it for?

For teams and SMBs that want to orchestrate multiple coding and workflow agents in parallel. Also for solo power users who like to experiment with multi-agent setups. The GUI helps you get started, but more advanced droid workflows still take real onboarding time.

My Take

Factory gets interesting if you work on several coding tasks in parallel and want the agents to basically compete with each other. For classic Cowork workflows like research and document work, the other alternatives are closer to the mark. But if you want more than a single-agent tool, Factory is at least worth a look.

Maybe I'm being too harsh, but:

While testing Factory, I kept getting the feeling the marketing copy was running a step ahead of what actually shows up day to day. Cool to see what's possible. For most Cowork refugees, though, it's still not the first tool I'd pull off the shelf.

- Multi-agent workflows are unique in this lineup

- Free tier to try without a credit card

- Solid funding from the fresh $150M Series C

- Full platform support on macOS and Windows

The Comparison at a Glance

Instead of scrolling back through all five sections, here are the key points in one table. Cowork sits at the top as the reference point, so you can see where the alternatives win, tie, or clearly fall short:

| Tool | macOS Apple Silicon | macOS Intel | Windows 11 Pro | Windows 11 Home | Linux | Pricing | Audience | Open source | EU/UK fully usable |
|---|---|---|---|---|---|---|---|---|---|
| Claude Cowork | Yes | No | Yes (Hyper-V) | No | No | from $20/month | Non-coders, click-and-go | No | Yes |
| Claude Code | Yes | Yes | Yes | Yes | Yes | from $20/month | Power users + knowledge workers | No | Yes |
| Codex Desktop | Yes | Yes | App yes, Computer Use no | App yes, Computer Use no | No | from $20/month | OpenAI stack outside EU/UK/CH | No | No (Computer Use blocked) |
| OpenCode | Yes | Yes | Yes | Yes | Yes | free (BYOK) | Tinkerers wanting model freedom | Yes (MIT) | Yes |
| OpenWork | Yes | Yes | Paid plan only | Paid plan only | Yes | free (MIT, BYOK) | Open-source fans wanting a GUI | Yes (MIT) | Yes |
| Factory | Yes | Yes | Yes | Yes | No | free + from $20/month | Teams, SMBs, multi-agent fans | No | Yes |

Feature Set Compared

Platforms are one half of the story. The actual feature set is the other. Here's the head-to-head on where each tool stands today (as of April 2026) on skills, plugins, MCP support, provider choice, memory, and multi-agent workflows:

| Tool | Skills/Subagents | Plugin marketplace | MCP | Model choice | Persistent memory | Multi-agent | Sandbox | GUI vs. CLI |
|---|---|---|---|---|---|---|---|---|
| Claude Cowork | Yes (skills bundle instructions) | Yes (Sales, Finance, Legal, HR, Engineering preinstalled) | Yes (connectors for data + tools) | Claude only | Per project (no cross-session memory) | Sub-agents via plugins | Yes (VZ/Hyper-V Linux VM) | GUI (desktop app) |
| Claude Code | Yes (4,200+ skills in marketplace) | Yes (official marketplace) | Yes (770+ MCP servers, OAuth) | Claude only (official) | CLAUDE.md + memory directory per subagent | Yes (subagents with own context) | Optional (permissions + hooks) | CLI + IDE plugins (VS Code, JetBrains) |
| Codex Desktop | Yes (skills bundle workflows) | Yes (90+ curated plugins) | Yes (with OAuth, official Codex MCP docs) | GPT + Codex models | Yes (memory preview, blocked in EU/UK/CH) | Multi-tab + subagents | Approval modes (Computer Use) | GUI + CLI |
| OpenCode | Yes (skills + hooks) | Plugin system (no marketplace) | Yes (local + remote, OAuth 2.0) | 75+ providers incl. local Ollama | Per project config | Via hooks + modes | Permission modes | CLI + desktop beta |
| OpenWork | Yes (skills + opencode plugins) | Modular plugin system | Yes (via plugin architecture) | Any (via OpenCode) | Session persistence, per project | No | Microsandbox (Rust) | GUI (Tauri desktop) |
| Factory | Yes (skills + custom droids) | Yes (plugins marketplace) | Yes (http + stdio MCP servers) | Claude, GPT-5, Gemini | Org and user memory + Knowledge droid | Yes (Code, Knowledge, Reliability droids) | Cloud container | GUI + CLI |

The key takeaways from the table:

- Claude Code has the deepest ecosystem as of April 2026: 4,200+ skills, 770+ MCP servers, and an official marketplace. Officially it's limited to Claude models, with gateway workarounds for others.

- Codex Desktop ships 90+ curated plugins, including app connectors (Notion, Linear, Slack, Figma, Box, Atlassian Rovo). Memory and Computer Use remain blocked in the EU/UK/Switzerland.

- OpenCode is the generalist with 75+ supported model providers, local Ollama support, and full MCP including OAuth 2.0. No central marketplace, but community plugins via config.

- OpenWork puts a clean GUI layer on top of OpenCode, with a skills manager, a permission layer, an audit trail, and a Rust-based microsandbox.

- Factory is the only tool here with a real multi-agent coordinator (Code, Knowledge, and Reliability droids) plus org and user memory.

- Cowork ships in the Pro plan with skills, MCP connectors, and plugin bundles for Sales, Finance, Legal, HR, and Engineering. No cross-session memory and no open marketplace.

Models Compared

Which models run under the hood, and are you locked to one vendor? That's one of the deciding questions, because it directly affects your API costs, your data-privacy position, and the ceiling on output quality. Here's the overview:

| Tool | Default model | Other supported models | Third-party cloud (own account) | Local models | Vendor lock-in |
|---|---|---|---|---|---|
| Claude Cowork | Claude Sonnet 4.6 | Opus 4.7, Haiku 4.5 | No | No | High (Anthropic only) |
| Claude Code | Claude Sonnet 4.6 | Opus 4.7, Haiku 4.5; via gateway: GPT-5, Gemini, Groq, Ollama | Yes (Vertex AI, Bedrock official) | Yes (via LiteLLM gateway) | Medium (officially Claude, workarounds possible) |
| Codex Desktop | GPT-5.5 | GPT-5.4 (fallback), GPT-5.3-Codex-Spark (Pro preview) | No | No | High (OpenAI only) |
| OpenCode | Freely selectable | 75+ providers: Anthropic, OpenAI, Google, Groq, Cerebras, DeepSeek, Mistral, xAI | Yes (Vertex, Bedrock, Azure) | Yes (Ollama, llama.cpp, LM Studio) | None |
| OpenWork | Freely selectable (via OpenCode) | Full OpenCode model lineup with 75+ providers | Yes (via OpenCode) | Yes (via OpenCode) | None |
| Factory | Claude Sonnet 4.5 | Opus 4.5, GPT-5, OpenAI o3, Gemini 2.5 Pro, GLM 4.6, DeepSeek V4, Qwen 3.6 | Yes | Limited | Low (model-agnostic) |

What that means in practice:

- Cowork and Codex Desktop are single-vendor tools. You're locked to the respective ecosystem. That isn't inherently bad (the native models are often the best tuned), but with geo-blocks or compliance requirements it turns into a problem fast.

- Claude Code officially supports Vertex AI and Bedrock. If you work at a company with a GCP or AWS contract, you can run Claude Code under your own cloud account, which improves both data privacy and cost transparency.

- OpenCode and OpenWork are the most universal tools in the comparison, with real model freedom from cloud down to local.

- Factory positions itself as explicitly model-agnostic and even lets you pick a different model per droid. On bigger setups that turns into a real cost-management tool.

Which Alternative Is Right for You?

Instead of a long recommendation list, three short questions will get you to the right tool in under a minute. First, a cheat sheet with the most common profiles:

| If you ... | Pick | Why |
|---|---|---|
| use an Intel Mac or Windows 11 Home and have Claude Pro | Claude Code | Runs on any hardware, included in the Pro plan at no extra cost |
| work mainly on Linux | Claude Code or OpenCode | Both run officially on Linux. Codex and Factory drop out |
| prefer clicking over typing | Codex Desktop or Factory | GUI instead of terminal (Codex without Computer Use in the EU/UK) |
| want open source and vendor freedom | OpenCode or OpenWork | MIT license, bring your own key, local models possible |
| need parallel multi-agent workflows | Factory | The only tool here built around multi-agent out of the box |
| need maximum privacy and full data control | OpenCode with a local LLM | No data leaves your machine, fully usable in the EU |

First question: which hardware do you use?

On Linux, Factory and Codex Desktop are out: Factory has no Linux version, and Codex Desktop's Linux build is announced but not here yet. That leaves Claude Code, OpenCode, and OpenWork. On Windows, OpenWork is only a compromise because its full sandbox sits behind the paid Enterprise variant. And anyone with an Intel Mac on the desk is better off with any of these five than with Cowork, which won't run there at all.

Second question: how high is your skill level?

If you've never opened a terminal, Codex Desktop or Factory will treat you best, since both ship with a GUI. If CLI doesn't scare you and you want the most powerful tool, Claude Code is the no-brainer. Open-source fans land on OpenCode or OpenWork.

Third question: how much do regional restrictions matter to you?

If you're in the EU, UK, or Switzerland and you actually need Computer Use, Codex Desktop is out for now. The other four alternatives are fully usable. OpenCode and OpenWork add a bonus on top: you can run them with local models if data privacy matters to you.
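If it helps to see the three questions as one filter, here's a compact Python sketch. The platform data comes from the comparison table above; how it treats skill level and Computer Use is my own simplification of the three questions, not an official compatibility matrix.

```python
# Platform support per the comparison table. "gui_first" marks the two tools
# recommended above for people who have never opened a terminal.
ALTERNATIVES = {
    "Claude Code": {"platforms": {"mac", "windows", "linux"}, "gui_first": False},
    "Codex Desktop": {"platforms": {"mac", "windows"}, "gui_first": True},
    "OpenCode": {"platforms": {"mac", "windows", "linux"}, "gui_first": False},
    # On Windows, OpenWork's full sandbox needs a paid plan, so it's left out there.
    "OpenWork": {"platforms": {"mac", "linux"}, "gui_first": False},
    "Factory": {"platforms": {"mac", "windows"}, "gui_first": True},
}


def shortlist(os_name: str, uses_terminal: bool, in_eu_uk_ch: bool,
              needs_computer_use: bool) -> list[str]:
    """Apply the three questions in order and return the remaining tools."""
    picks = []
    for name, info in ALTERNATIVES.items():
        # Question 1: which hardware / operating system?
        if os_name not in info["platforms"]:
            continue
        # Question 2: skill level. Non-terminal users get the GUI-first picks only.
        if not uses_terminal and not info["gui_first"]:
            continue
        # Question 3: regional restrictions. Codex Computer Use is macOS-only
        # and geo-blocked in the EU, UK, and Switzerland.
        if (name == "Codex Desktop" and needs_computer_use
                and (os_name != "mac" or in_eu_uk_ch)):
            continue
        picks.append(name)
    return picks
```

For example, `shortlist("linux", uses_terminal=True, in_eu_uk_ch=False, needs_computer_use=False)` returns `["Claude Code", "OpenCode", "OpenWork"]`, matching the first question above.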

My standard pick for most readers:

Try Claude Code if you already have Claude Pro or you don't mind the learning curve. For a clean start, the Claude Code guide is a complete walkthrough. To check token and plan costs first, hop over to the Claude Code pricing breakdown. For open-source fans, OpenCode is the best starting point.